Introduction

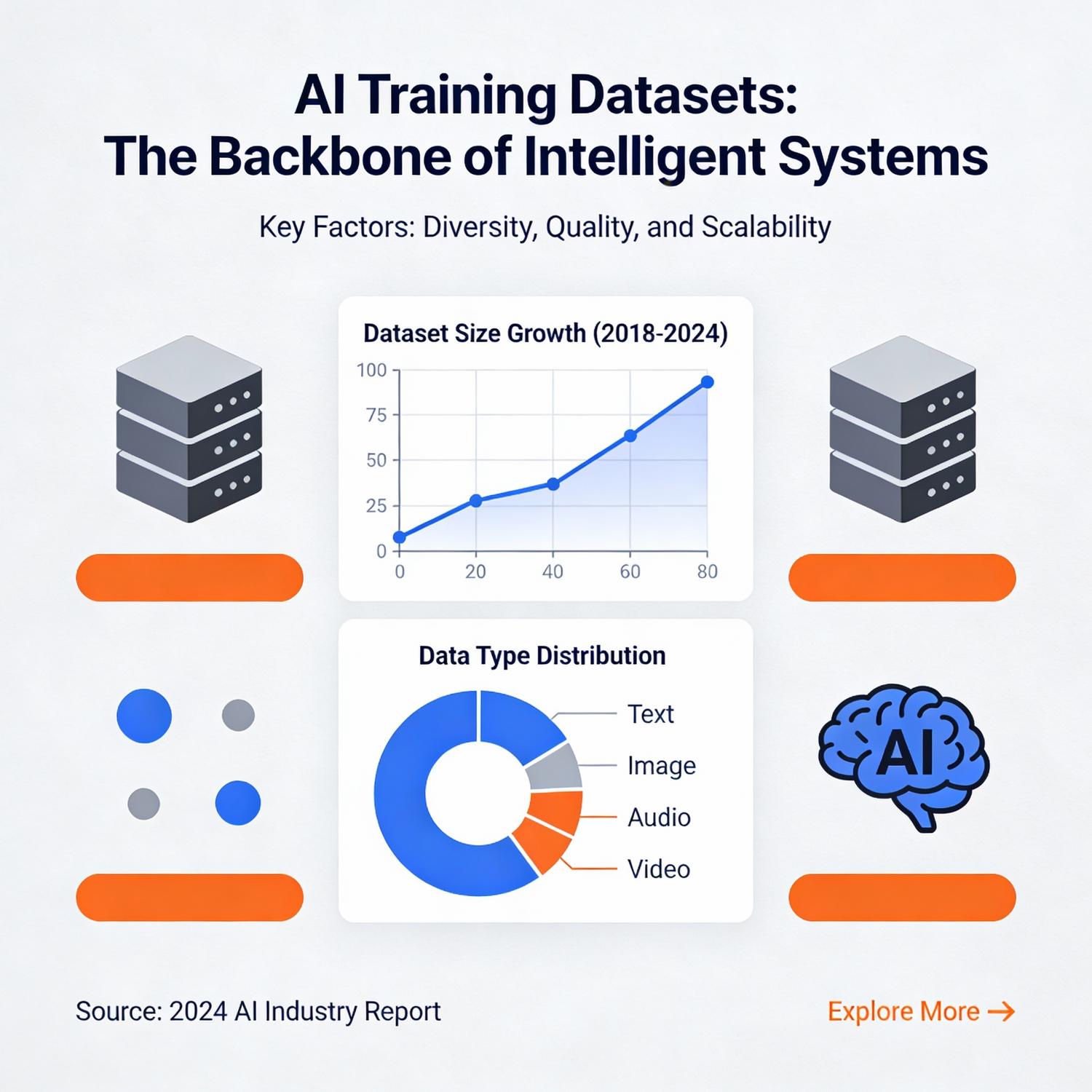

The foundation of modern artificial intelligence systems rests on two critical pillars that determine their effectiveness and capability. The quality and diversity of AI training datasets directly influence how well machine learning models understand patterns, make predictions, and generate outputs. Simultaneously, PromptEngineering has emerged as an essential discipline for maximizing the potential of these systems, enabling users to communicate effectively with language models and extract precise, valuable responses. Together, these elements shape the performance boundaries of contemporary AI applications across industries.

Organizations investing in machine learning infrastructure quickly discover that raw computational power alone cannot guarantee success. The careful curation of training data, combined with sophisticated techniques for querying models, creates the difference between mediocre results and transformative capabilities. As businesses increasingly rely on intelligent systems for decision-making, content generation, and automation, understanding these foundational components becomes imperative for technical leaders and practitioners alike.

Core Analysis

The relationship between AI training datasets and PromptEngineering represents a symbiotic dynamic where each element amplifies the other’s effectiveness. High-quality training data provides models with comprehensive knowledge bases, while refined query techniques unlock that knowledge through precise instructions. This interplay determines whether an AI system delivers generic outputs or contextually relevant, nuanced responses tailored to specific requirements.

Data quality encompasses several dimensions beyond mere volume. Diversity ensures models encounter varied examples across demographics, contexts, and edge cases. Accuracy prevents the propagation of misinformation through learned patterns. Recency keeps models aligned with current information and cultural contexts. Representativeness addresses bias concerns by including balanced perspectives across different populations and scenarios.

The architecture of modern language models reflects careful decisions about what information to include during the learning phase. Researchers must balance breadth against depth, general knowledge against specialized expertise, and historical data against contemporary relevance. These trade-offs manifest in model behavior, influencing everything from factual accuracy to stylistic tendencies.

Query formulation techniques have evolved from simple commands to sophisticated frameworks incorporating context, constraints, role assignments, and output specifications. Practitioners now employ strategies like few-shot learning, chain-of-thought reasoning, and structured templates to guide model responses. The effectiveness of these approaches depends heavily on understanding how models process information and generate text.

Temperature settings, token limits, and sampling methods provide additional control mechanisms that fine-tune output characteristics. Lower temperature values produce more deterministic, focused responses, while higher settings encourage creativity and variation. These parameters work in concert with instruction design to shape the final output according to specific use case requirements.

The feedback loop between training data and query optimization continues throughout a model’s lifecycle. User interactions reveal gaps in knowledge coverage, ambiguities in instruction interpretation, and opportunities for improved response quality. Organizations committed to maintaining competitive AI systems must invest in both data pipeline refinement and query technique development.

Use Cases & Applications

Enterprise content generation represents one of the most widespread applications, where businesses leverage intelligent systems to produce marketing copy, technical documentation, and customer communications at scale. Financial institutions employ these technologies for risk assessment, fraud detection, and market analysis by processing vast datasets of transaction histories and economic indicators.

Healthcare organizations utilize machine learning for diagnostic support, treatment recommendations, and drug discovery research. Models trained on medical literature, clinical trials, and patient records assist practitioners in identifying patterns that might escape human observation. Legal firms apply similar approaches to contract analysis, case law research, and document review processes.

Customer service automation has transformed through conversational interfaces that understand context, sentiment, and intent. These systems handle routine inquiries, escalate complex issues appropriately, and maintain consistent brand voice across interactions. E-commerce platforms deploy recommendation engines that analyze purchase histories, browsing behaviors, and demographic information to personalize shopping experiences.

Software development teams integrate code generation and debugging assistance into their workflows, accelerating development cycles and reducing error rates. Educational institutions create adaptive learning systems that adjust content difficulty and pacing based on individual student performance and learning styles.

Challenges & Limitations

Data bias remains a persistent concern, as training information inevitably reflects the perspectives, prejudices, and blind spots of its creators and sources. Models learn and perpetuate these biases unless deliberate intervention occurs during curation and evaluation phases. Addressing this challenge requires ongoing auditing, diverse data sourcing, and careful consideration of representation across protected categories.

Privacy considerations complicate data collection efforts, particularly when training requires sensitive personal information. Regulatory frameworks like GDPR and CCPA impose strict requirements on data handling, storage, and usage rights. Organizations must balance the appetite for comprehensive training data against legal obligations and ethical responsibilities to individuals.

Computational costs associated with training large-scale models create barriers to entry for smaller organizations and researchers. The environmental impact of energy-intensive training runs raises sustainability questions about the long-term viability of current approaches. Alternative architectures and training methodologies seek to reduce these resource requirements without sacrificing performance.

Model hallucination—the generation of plausible but factually incorrect information—poses risks in high-stakes applications. Current systems lack robust mechanisms for uncertainty quantification, making it difficult to distinguish confident correct responses from confident incorrect ones. Users must implement verification procedures and maintain appropriate skepticism when deploying these tools.

Context window limitations restrict the amount of information models can consider simultaneously, creating challenges for tasks requiring extensive background knowledge or long-form analysis. While recent architectures have expanded these windows significantly, fundamental constraints remain on processing capacity and attention mechanisms.

Future Outlook

Multimodal learning approaches that integrate text, images, audio, and video promise more comprehensive understanding capabilities. Models that process information across sensory modalities can develop richer contextual awareness and handle more complex real-world scenarios. This evolution will enable applications previously constrained by single-modality limitations.

Federated learning techniques offer potential solutions to privacy concerns by training models on distributed data without centralizing sensitive information. Organizations can contribute to collective intelligence while maintaining control over proprietary or personal data. This approach may accelerate adoption in regulated industries hesitant to share confidential information.

Specialized domain models tailored to specific industries or applications will likely proliferate as organizations seek competitive advantages through customization. Rather than relying solely on general-purpose systems, businesses will invest in fine-tuning and domain-specific training to address unique requirements and terminology.

Improved interpretability methods will help users understand why models produce particular outputs, building trust and enabling better debugging. Techniques that expose reasoning processes, highlight influential training examples, and quantify confidence levels will become standard features in production systems.

Regulatory frameworks will mature as governments and industry bodies establish standards for responsible development and deployment. Certification programs, audit requirements, and liability structures will create accountability mechanisms that shape how organizations approach implementation decisions.

Conclusion

The convergence of robust AI training datasets and sophisticated PromptEngineering techniques defines the current frontier of artificial intelligence capabilities. Organizations that master both dimensions position themselves to extract maximum value from their investments in machine learning infrastructure. Success requires ongoing commitment to data quality, continuous refinement of interaction methods, and vigilant attention to ethical considerations.

As these technologies mature, the gap between leading practitioners and followers will widen based on expertise in these foundational areas. Companies that treat data curation and query optimization as strategic competencies rather than technical afterthoughts will capture disproportionate benefits. The future belongs to organizations that recognize the symbiotic relationship between comprehensive training information and effective communication strategies, investing appropriately in both to unlock transformative potential across their operations.

Leave a Reply