Introduction

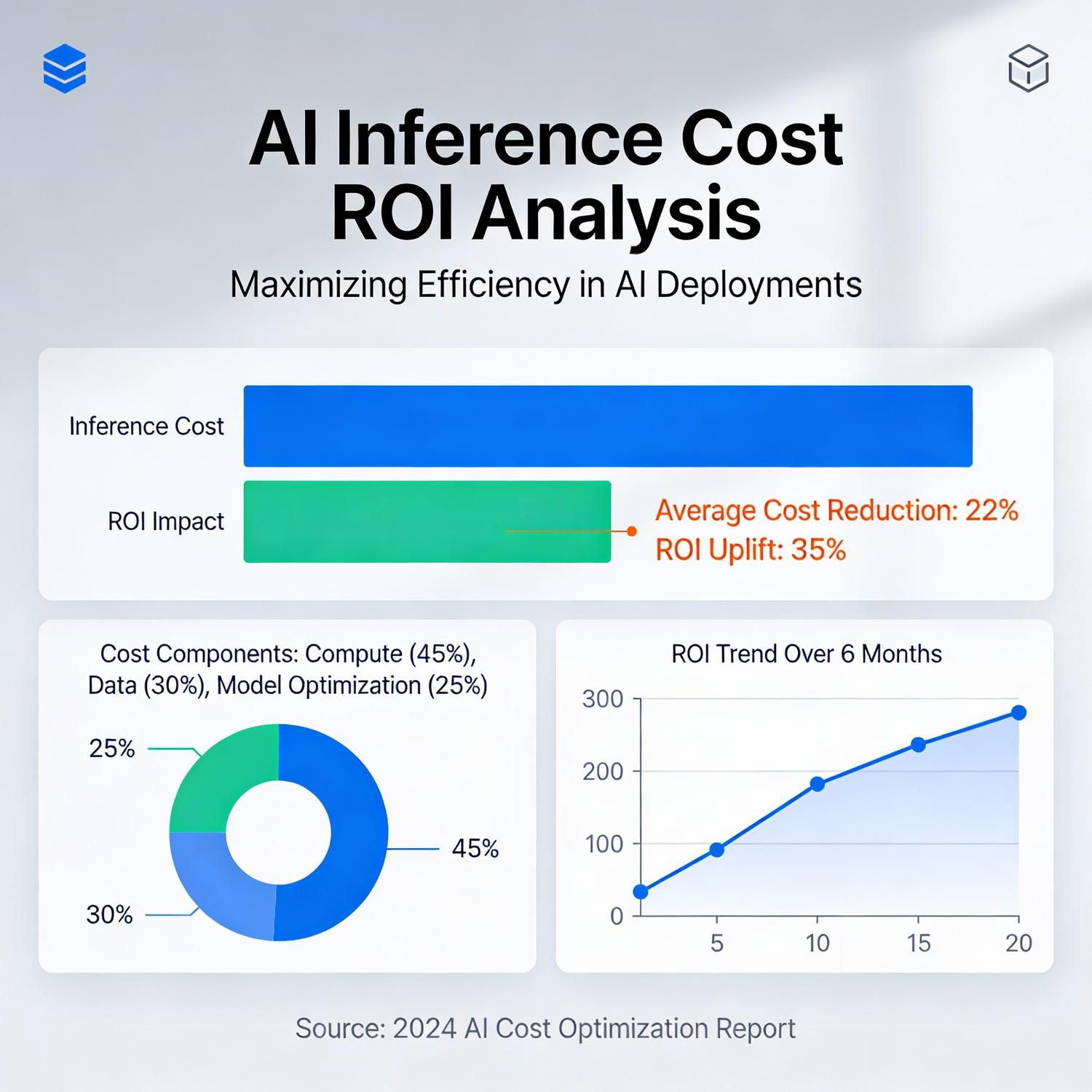

Organizations deploying artificial intelligence systems face mounting pressure to demonstrate tangible financial returns. As machine learning models become integral to business operations, understanding how inference cost, return on investment (ROI), and cost optimization relate to one another has become a priority for technology leaders. The economics of running inference workloads, where trained models generate predictions and insights, directly affect profitability, scalability, and competitive positioning.

Modern enterprises process billions of inference requests daily, from recommendation engines to fraud detection systems. Each prediction carries computational expenses that accumulate rapidly at scale. Companies that master the balance between performance requirements and resource efficiency gain substantial advantages in their markets. Strategic investment in infrastructure, model architecture, and operational practices determines whether AI initiatives deliver sustainable value or become financial burdens.

Core Analysis

The financial dynamics of inference operations differ fundamentally from model training. While training represents a one-time or periodic investment, inference costs recur continuously as production systems serve real-world requests. This operational expense structure demands rigorous analysis of inference cost, ROI, and cost optimization strategies to maintain healthy margins.
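As a rough illustration of how recurring inference spend relates to return, the sketch below computes cost per request and a simple ROI ratio. The class name, field names, and all figures are hypothetical and chosen only for the arithmetic; real attribution of value per request is considerably harder.

```python
from dataclasses import dataclass


@dataclass
class InferenceEconomics:
    """Hypothetical monthly figures for one production model."""
    requests_per_month: int
    infra_cost_per_month: float   # assumed total serving cost in USD
    value_per_request: float      # assumed attributed revenue or savings in USD

    def cost_per_request(self) -> float:
        return self.infra_cost_per_month / self.requests_per_month

    def roi(self) -> float:
        """Return (value - cost) / cost as a fraction."""
        value = self.requests_per_month * self.value_per_request
        return (value - self.infra_cost_per_month) / self.infra_cost_per_month


# Illustrative numbers only, not taken from any real deployment.
example = InferenceEconomics(
    requests_per_month=10_000_000,
    infra_cost_per_month=20_000.0,
    value_per_request=0.005,
)
print(f"cost per request: ${example.cost_per_request():.4f}")
print(f"ROI: {example.roi():.0%}")
```

Because the cost term recurs every month, any reduction in cost per request raises ROI for as long as the model stays in production.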

Hardware Selection and Utilization

Processing unit choices significantly influence expense structures. Graphics processing units excel at parallel operations but consume substantial power. Central processing units offer flexibility for smaller workloads. Specialized accelerators like tensor processing units deliver exceptional efficiency for specific model architectures. Organizations must evaluate throughput requirements, latency constraints, and utilization patterns when selecting infrastructure.

Maximizing hardware utilization prevents waste. Batch processing combines multiple requests to improve efficiency. Dynamic scaling adjusts capacity based on demand fluctuations. Multi-tenancy allows different workloads to share resources during off-peak periods. These techniques transform fixed infrastructure investments into variable costs aligned with business activity.
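The effect of batching and utilization on unit cost can be sketched with a simple latency model. The function below assumes that each batch pays a fixed overhead plus a per-item cost, which is a simplification; the parameter names and numbers are illustrative rather than measured.

```python
def cost_per_1k_requests(
    batch_size: int,
    fixed_overhead_ms: float,
    per_item_ms: float,
    hourly_cost_usd: float,
    utilization: float = 1.0,
) -> float:
    """Estimate USD per 1,000 requests for one accelerator.

    Assumes batch latency = fixed overhead + per-item time * batch size,
    and that the device is busy for `utilization` of each hour.
    """
    if batch_size < 1 or not 0.0 < utilization <= 1.0:
        raise ValueError("batch_size must be >= 1 and utilization in (0, 1]")
    batch_latency_s = (fixed_overhead_ms + per_item_ms * batch_size) / 1000.0
    requests_per_second = batch_size / batch_latency_s * utilization
    return hourly_cost_usd / (requests_per_second * 3600.0) * 1000.0


# Hypothetical device: 8 ms fixed overhead, 2 ms per item, $3.60 per hour.
for size in (1, 8, 32):
    print(size, round(cost_per_1k_requests(size, 8.0, 2.0, 3.60), 5))
```

Under these assumptions, larger batches amortize the fixed overhead, and lower utilization raises unit cost proportionally. Larger batches also increase per-request latency, which is why batch size is usually bounded by a latency target.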

Model Architecture Optimization

Computational complexity drives operational expense. Model compression techniques reduce resource requirements, often with little loss of accuracy when the compressed model is validated carefully. Quantization converts high-precision weights to lower-bit representations, decreasing memory bandwidth and storage needs. Pruning removes redundant parameters that contribute minimally to predictions. Knowledge distillation transfers capabilities from large models to smaller, more efficient variants.
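To make quantization concrete, here is a minimal sketch of symmetric 8-bit quantization of a weight list in plain Python. It is one simple scheme among several (per-tensor, symmetric, no calibration data), and the function names are illustrative; production toolchains typically use more refined methods.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.42, -1.0, 0.07, 0.0, 0.91]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"max abs error: {max_error:.5f}")
```

Each weight now fits in one byte instead of four (for 32-bit floats), and the reconstruction error per weight is bounded by half the scale. Whether that error is acceptable depends on the model and should be checked against task accuracy.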

Architecture choices impact long-term economics. Transformer models deliver exceptional accuracy but demand significant compute resources. Convolutional networks often provide better efficiency for vision tasks. Recurrent architectures suit sequential data with varying memory requirements. Selecting appropriate architectures for specific use cases prevents over-engineering and unnecessary expenditure.

Inference Serving Infrastructure

Deployment strategies affect both performance and costs. Edge computing processes data locally, reducing latency and bandwidth expenses. Cloud-based serving offers elastic scalability and managed infrastructure. Hybrid approaches balance control, performance, and flexibility based on workload characteristics.

Caching frequently requested predictions eliminates redundant computation. Request routing directs traffic to optimal resources based on model complexity and latency requirements. Load balancing distributes workload evenly across available capacity. These serving optimizations compound savings across millions of daily requests.
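Prediction caching can be sketched with the standard library's `functools.lru_cache`. The `run_model` function below is a stand-in for a real model call, and the example assumes deterministic predictions and hashable inputs; it ignores cache invalidation when the model is updated.

```python
from functools import lru_cache

CALLS = {"model": 0}  # counts how often the (pretend) model actually runs


def run_model(features: tuple) -> float:
    """Placeholder for an expensive model invocation."""
    CALLS["model"] += 1
    return sum(features) / len(features)


@lru_cache(maxsize=10_000)
def cached_predict(features: tuple) -> float:
    # Identical feature tuples are served from memory after the first call.
    return run_model(features)


requests = [(1.0, 2.0), (3.0, 4.0), (1.0, 2.0), (1.0, 2.0)]
predictions = [cached_predict(r) for r in requests]
info = cached_predict.cache_info()
print(predictions, f"hits={info.hits} misses={info.misses}")
```

In this toy run, four requests trigger only two model invocations. The achievable hit rate in practice depends on how often identical inputs recur.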

Use Cases & Applications

E-commerce Personalization

Retail platforms deploy recommendation systems that process customer behavior in real-time. Product suggestions, dynamic pricing, and inventory optimization rely on continuous inference operations. Companies optimize serving costs by caching popular recommendations, using lightweight models for initial filtering, and reserving complex models for high-value interactions. Revenue attribution demonstrates clear returns when recommendation accuracy drives conversion rates.
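The pattern of using a lightweight model for initial filtering and a complex model for the final ranking can be sketched as a two-stage cascade. The scoring functions, field names, and catalog below are hypothetical placeholders for real models and data.

```python
def cascade_rank(candidates, cheap_score, expensive_score, shortlist_size):
    """Shortlist with the cheap scorer, then rank only the shortlist with the expensive one."""
    shortlist = sorted(candidates, key=cheap_score, reverse=True)[:shortlist_size]
    return sorted(shortlist, key=expensive_score, reverse=True)


EXPENSIVE_CALLS = {"count": 0}


def popularity(item):
    """Cheap stage: a precomputed popularity signal."""
    return item["popularity"]


def affinity(item):
    """Expensive stage: stands in for a large personalized model."""
    EXPENSIVE_CALLS["count"] += 1
    return item["affinity"]


catalog = [
    {"id": "a", "popularity": 0.9, "affinity": 0.2},
    {"id": "b", "popularity": 0.8, "affinity": 0.7},
    {"id": "c", "popularity": 0.1, "affinity": 0.9},
    {"id": "d", "popularity": 0.6, "affinity": 0.5},
]
ranked = cascade_rank(catalog, popularity, affinity, shortlist_size=2)
print([item["id"] for item in ranked], EXPENSIVE_CALLS["count"])
```

The expensive scorer runs on two items instead of four. Note that item `c`, which has the highest affinity, never reaches the second stage; the shortlist size controls this trade-off between cost and recommendation quality.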

Financial Services

Banks and payment processors run fraud detection models that evaluate transactions within milliseconds. The cost of false negatives—allowing fraudulent transactions—far exceeds inference expenses. However, efficient operations enable processing higher transaction volumes without proportional infrastructure growth. Model optimization reduces latency while maintaining detection accuracy, directly improving customer experience and operational margins.

Healthcare Diagnostics

Medical imaging analysis assists radiologists in detecting abnormalities. High-resolution images require substantial computational resources for accurate analysis. Specialized hardware accelerates processing while maintaining diagnostic precision. The value proposition extends beyond direct cost savings to include improved patient outcomes, reduced diagnostic time, and enhanced clinician productivity.

Autonomous Systems

Self-driving vehicles and robotics applications demand real-time inference with strict latency requirements. Edge deployment minimizes response times while reducing cloud connectivity dependencies. Power-efficient models extend battery life in mobile applications. Safety-critical systems justify premium infrastructure investments, but optimization still delivers competitive advantages through extended operational range and reduced cooling requirements.

Challenges & Limitations

Performance-Cost Tradeoffs

Aggressive optimization may degrade model accuracy or increase latency. Finding the optimal balance requires extensive experimentation and domain expertise. Different use cases tolerate varying levels of performance degradation. Customer-facing applications demand consistent quality, while internal analytics workflows accept greater flexibility. Organizations struggle to establish appropriate thresholds without comprehensive testing frameworks.

Measurement Complexity

Attributing business outcomes to specific inference operations challenges financial analysis. Revenue impact depends on multiple factors beyond model predictions. Customer lifetime value, market conditions, and competitive dynamics influence results. Establishing causality requires controlled experiments and statistical rigor that many organizations lack. Incomplete measurement frameworks obscure true returns and hinder optimization efforts.
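One common form of controlled experiment is an A/B test comparing conversion rates with and without a model change. The sketch below applies a standard two-proportion z-test using only the standard library; the sample counts are invented for illustration, and a real analysis would also consider test duration, multiple comparisons, and effect size.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in outcomes; test is undefined")
    z = (p_b - p_a) / std_err
    # Two-sided tail probability of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical experiment: control (A) vs. new model (B), 10,000 users each.
z, p_value = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=560, n_b=10_000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

With these made-up counts, a 0.6 percentage point lift falls just short of the conventional 0.05 significance threshold, which illustrates why apparent revenue gains need adequate sample sizes before they are credited to a model.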

Technical Debt and Legacy Systems

Existing infrastructure constraints limit optimization opportunities. Legacy applications may lack instrumentation for detailed cost tracking. Monolithic architectures prevent granular resource allocation. Migration to modern platforms requires significant engineering investment with uncertain payback periods. Organizations balance incremental improvements against wholesale system redesigns.

Skill Gaps

Optimizing inference operations demands expertise spanning machine learning, systems engineering, and financial analysis. Talent shortages force companies to prioritize between model development and operational efficiency. Training existing staff requires time and resources. External consultants provide temporary solutions but don’t build internal capabilities. This skills deficit slows optimization initiatives across industries.

Dynamic Workload Patterns

Traffic fluctuations complicate capacity planning. Seasonal variations, viral content, and market events create unpredictable demand spikes. Over-provisioning wastes resources during quiet periods. Under-provisioning degrades user experience during peak times. Predictive scaling helps, but imperfect forecasts still result in suboptimal resource allocation.
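The cost of over- and under-provisioning can be made concrete with a small calculation over an hourly demand trace. The function, the demand figures, and the per-replica capacity and price below are all hypothetical.

```python
def provisioning_report(hourly_demand, capacity_per_replica, replicas, cost_per_replica_hour):
    """Summarize served, unserved, and idle capacity for a fixed replica count."""
    capacity = capacity_per_replica * replicas
    return {
        "served": sum(min(d, capacity) for d in hourly_demand),
        "unserved": sum(max(d - capacity, 0) for d in hourly_demand),
        "idle_capacity": sum(max(capacity - d, 0) for d in hourly_demand),
        "cost_usd": replicas * cost_per_replica_hour * len(hourly_demand),
    }


# Four hours of made-up demand (requests per hour) with one spike.
demand = [200, 300, 1200, 400]
lean = provisioning_report(demand, capacity_per_replica=250, replicas=2, cost_per_replica_hour=1.5)
padded = provisioning_report(demand, capacity_per_replica=250, replicas=5, cost_per_replica_hour=1.5)
print("lean:  ", lean)
print("padded:", padded)
```

In this example, the lean configuration costs less but drops 700 requests during the spike, while the padded one serves everything and leaves most of its capacity idle. Autoscaling aims to move between these two extremes as demand changes.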

Future Outlook

Hardware Innovation

Next-generation accelerators promise order-of-magnitude efficiency improvements. Photonic computing leverages light for ultra-low-power operations. Neuromorphic chips mimic biological neural networks for specific workloads. These emerging technologies will reshape economic calculations, enabling previously impractical applications while reducing costs for existing use cases.

Automated Optimization

Machine learning systems increasingly optimize their own operations. Neural architecture search discovers efficient model designs automatically. Reinforcement learning tunes serving parameters in response to changing conditions. These automated approaches reduce manual optimization effort and can match or exceed hand-tuned configurations. Wider availability of optimization tools will narrow the advantages currently held by resource-rich organizations.

Standardization and Benchmarking

Industry-wide performance standards enable meaningful cost comparisons across vendors and approaches. Open benchmarks like MLPerf establish common evaluation frameworks. Standardized metrics facilitate informed infrastructure decisions. Increased transparency drives competitive pressure for efficiency improvements across the ecosystem.

Sustainable Computing

Environmental considerations increasingly influence infrastructure decisions. Energy-efficient operations reduce both costs and carbon footprints. Renewable energy integration, waste heat recovery, and power-aware scheduling align economic and environmental objectives. Regulatory pressure and corporate sustainability commitments accelerate adoption of green computing practices.

Serverless and Managed Services

Cloud providers offer increasingly sophisticated managed inference platforms. Pay-per-request pricing eliminates idle capacity costs. Automatic optimization applies best practices without manual configuration. These services lower barriers to entry while providing enterprise-grade efficiency. However, vendor lock-in and reduced customization represent tradeoffs organizations must evaluate.
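Whether pay-per-request pricing beats dedicated capacity depends on volume. The sketch below computes a break-even point under the simplifying assumption of a flat per-request price and a flat monthly cost for dedicated infrastructure; both prices are invented and do not reflect any particular provider.

```python
def breakeven_requests(dedicated_monthly_usd: float, price_per_request_usd: float) -> float:
    """Monthly request volume at which both options cost the same."""
    return dedicated_monthly_usd / price_per_request_usd


def cheaper_option(monthly_requests: int, dedicated_monthly_usd: float, price_per_request_usd: float) -> str:
    """Name the cheaper option for a given monthly volume."""
    pay_per_request_usd = monthly_requests * price_per_request_usd
    return "pay-per-request" if pay_per_request_usd < dedicated_monthly_usd else "dedicated"


# Hypothetical prices: $1,000 per month dedicated vs. $0.0002 per request.
DEDICATED_USD = 1_000.0
PER_REQUEST_USD = 0.0002
print(f"break-even: {breakeven_requests(DEDICATED_USD, PER_REQUEST_USD):,.0f} requests per month")
for volume in (1_000_000, 20_000_000):
    print(volume, cheaper_option(volume, DEDICATED_USD, PER_REQUEST_USD))
```

Below the break-even volume, paying per request avoids idle capacity costs; above it, dedicated capacity is cheaper as long as it is well utilized.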

Conclusion

The financial viability of artificial intelligence initiatives depends critically on managing operational expenses at scale. Organizations that systematically address inference cost, ROI, and cost optimization gain sustainable competitive advantages through improved margins, faster innovation cycles, and enhanced customer experiences. Success requires balancing technical performance with economic efficiency across hardware selection, model architecture, and serving infrastructure.

Strategic approaches combine multiple optimization techniques rather than relying on single solutions. Continuous measurement and experimentation identify opportunities specific to each organization’s workload patterns and business objectives. As the technology landscape evolves, maintaining operational excellence demands ongoing investment in skills development, infrastructure modernization, and process refinement. Companies that embed economic discipline into their machine learning operations position themselves to capture the full value of artificial intelligence while avoiding the pitfalls of unsustainable cost structures.
